The Tool Selection Problem: Why AI Agents Call The Wrong Tool And How To Fix It

Tool descriptions are not documentation. They are instructions. Here is what four recent papers and a minimal agent experiment reveal about why agents pick the wrong tool in production.

TLDR: AI agent tool calling fails for predictable and fixable reasons. The standard debugging instinct, fixing the system prompt, targets the wrong layer. The model’s selection decision is based on the description text, not the system prompt. This post maps four failure modes, a minimal-agent experiment that exposed their mechanics, and the description patterns that fix each one. If you build agents, the tool description is your most important engineering surface.

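On τ-bench, a benchmark for tool-using agents, even state-of-the-art function-calling models complete fewer than half of the tasks, and they succeed on all eight repeated trials (pass^8) for under 25% of retail tasks. The majority of failures trace back to tool selection errors, not execution errors.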

So, how does it happen?

The model picks the wrong function. Not because it misunderstood the user’s intent, but because the descriptions of two tools were close enough that the selection signal was ambiguous. This is a description problem, not a model problem. And it has a description-level fix.

How the Model Decides Which Tool to Call

When a language model processes a tool-calling request, it reads each tool’s description and works out which function best matches the current context. The decision draws on three signals, in this order:

1. Description text.
2. Parameter names.
3. Tool ordering in the context window.

The system prompt, where most teams invest their debugging effort, barely factors in at selection time. Anthropic’s tool-definition documentation says so directly: detailed descriptions are “by far the most important factor in tool performance.” Anthropic recommends at least three to four sentences per tool, explaining what it does, when to use it, and, critically, when not to use it. Most production tool definitions are one sentence long.
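As a rough illustration of that guidance, here is a sketch of a tool definition in the name / description / input_schema shape that Anthropic’s tool-use API accepts. The tool `get_order_status`, its `order_id` parameter, and the neighboring `search_orders` tool are hypothetical, invented only for this example.

```python
# Hypothetical tool definition; the names and wording are illustrative only.
get_order_status = {
    "name": "get_order_status",
    "description": (
        "Looks up the current fulfillment status of a single existing order. "
        "Use this when the user has an order ID and wants to know where that order is. "
        "Returns the status, the carrier, and the estimated delivery date. "
        "Do not use this to find orders by customer name or date; "
        "use search_orders for that."
    ),
    "input_schema": {
        "type": "object",
        "properties": {
            "order_id": {
                "type": "string",
                "description": "Exact order identifier as shown on the receipt",
            }
        },
        "required": ["order_id"],
    },
}


def sentence_count(text: str) -> int:
    """Rough sentence count: split on periods and ignore empty pieces."""
    return sum(1 for part in text.split(".") if part.strip())


print(sentence_count(get_order_status["description"]))
```

The description covers what the tool does, when to use it, what it returns, and when not to use it. The sentence counter is only a crude length check, not a quality measure.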

But why is it important?

A 2026 study on tool calling interpretability found that tool identity is linearly readable from the model’s internal representations before the first output token appears. In other words, the model has already settled on a tool before it writes a single word of its response.

When you see a wrong tool call in your logs, that decision was made a step earlier. Patching the system prompt changes how the task is framed, but it does not touch the signal the model used to pick the tool.

What Causes Agents to Pick the Wrong Tool

Four failure modes account for the large majority of selection errors in production. It is worth naming each one clearly, because the fix for each is different.

1. Ambiguous overlap

Two tools serve similar purposes, but their descriptions do not clearly delineate their boundaries. The model selects inconsistently between them because both descriptions are compatible with the same user request. Research on rewriting tool descriptions for reliability found that this is especially common with domain-specific APIs, where the functional difference between two tools is narrow but the consequence of calling the wrong one is significant.
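One cheap way to surface candidate overlaps is to compare descriptions pairwise. The sketch below uses word-set Jaccard similarity, which is a crude lexical proxy and not a model of how the LLM actually scores tools. The tool names, descriptions, and the 0.5 threshold are all hypothetical.

```python
import re
from itertools import combinations


def words(text: str) -> set[str]:
    """Lowercased word set of a description."""
    return set(re.findall(r"[a-z_]+", text.lower()))


def jaccard(a: str, b: str) -> float:
    """Shared words divided by total distinct words."""
    wa, wb = words(a), words(b)
    union = wa | wb
    return len(wa & wb) / len(union) if union else 0.0


def overlapping_pairs(tools: dict[str, str], threshold: float = 0.5):
    """Return (tool_a, tool_b, score) for pairs at or above the threshold."""
    pairs = []
    for x, y in combinations(sorted(tools), 2):
        score = jaccard(tools[x], tools[y])
        if score >= threshold:
            pairs.append((x, y, score))
    return pairs


# Hypothetical one-sentence descriptions of the kind that tend to collide.
tools = {
    "get_invoice": "Retrieve an invoice for a customer account.",
    "get_statement": "Retrieve a statement for a customer account.",
    "send_email": "Send an email message to a recipient address.",
}

for a, b, score in overlapping_pairs(tools):
    print(f"{a} vs {b}: {score:.2f}")
```

A flagged pair is a prompt to read both descriptions side by side, not proof that the model will confuse them.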

2. Missing negative constraints

The description explains what a tool does, but not when to avoid calling it. Without an explicit boundary, the model treats any plausible overlap as a valid trigger. Anthropic’s tooling guidance lists “when it should not be used” as a required part of every well-formed tool description. Most teams skip it entirely.

3. Misleading parameter names

Parameter names carry semantic weight independently of the description text. A parameter named query invites broader interpretation than one named search_term. A parameter named message suggests a different trigger than user_input, even when the underlying function is identical. Names are part of the selection signal, whether you treat them that way or not.

4. Indiscriminate calling

The model invokes tools to answer queries it can answer based on its own knowledge. A May 2026 paper on tool-call necessity found that agents make unnecessary tool calls in nearly half of queries where a direct answer is available, adding latency and cost with no accuracy benefit.

These failure modes also compound. AgentProp-Bench, a 2026 benchmark for tool-using agents, found that a parameter-level selection error cascades to a wrong final answer approximately 62% of the time. The wrong tool call is rarely the end of the failure. It is the start of it.

What Building a Minimal Agent Taught Me About Tool Selection

I wanted to understand selection failures at the mechanism level, so I spent time building a minimal coding agent using Pi, a terminal agent developed by Mario Zechner. Pi ships with four tools: read, write, edit, and bash. Total tool definitions sit under 1,000 tokens combined.

The minimal surface made the mechanics visible in a way that production agents with fifteen or twenty tools simply cannot. With four clearly distinct tools, the model consistently called the correct one. Each description was narrow enough that no two tools were plausible candidates for the same request. There was no ambiguity to resolve, so none occurred.

Then I added a fifth tool: a file search function whose description partially overlapped with bash. Selection degraded immediately. The model started calling the search tool even when bash was the right choice. This happened because both descriptions were compatible with the user’s request at the surface level. The model was not broken. The descriptions were.

Zechner’s design philosophy for Pi centers on exactly this point. Context control is the primary lever, not model capability. When descriptions are distinct and scoped, the selection signal is clean. When they overlap, the model resolves the ambiguity arbitrarily. What you see on the outside is a flaky agent.

This is the same principle Merve Noyan at Hugging Face describes as the “skills” framing. Tools designed with a single, non-overlapping trigger condition succeed consistently. Tools designed as general-purpose API wrappers fail in proportion to how much they overlap.

To be clear, this is practitioner-observed evidence, not a controlled study. But the pattern matches what the 2026 papers describe, and it is reproducible in an afternoon with any minimal agent harness.

Description Patterns That Fix Each Failure Mode

Each failure mode has a direct fix at the description level. None of them requires a better model.

1. Fix ambiguous overlap

Add a disambiguation sentence to each affected tool. Something like: “Use this tool when X. Use [other tool name] when Y.” Make the boundary explicit in the description rather than expecting the model to infer it from context.
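A sketch of what that can look like for the bash and file-search pair from the experiment above. The description wording and the name `search_files` are hypothetical, not Pi’s actual tool definitions. The helper only checks that each description names its counterpart.

```python
# Hypothetical descriptions; each one states its own trigger and points to the other tool.
TOOLS = {
    "search_files": (
        "Finds files whose names or contents match a pattern. "
        "Use this tool when the goal is to locate a file or a line of text. "
        "Use bash when the goal is to run a command, build, or test."
    ),
    "bash": (
        "Executes a shell command and returns its output. "
        "Use this tool when the goal is to run a command, build, or test. "
        "Use search_files when the goal is only to locate a file or a line of text."
    ),
}


def names_counterpart(tools: dict[str, str], a: str, b: str) -> bool:
    """True if each of the two descriptions mentions the other tool by name."""
    return b in tools[a] and a in tools[b]


print(names_counterpart(TOOLS, "search_files", "bash"))
```

Writing the boundary into both descriptions means the signal is present whichever tool the model considers first.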

2. Fix missing negative constraints

Add one exclusion sentence per tool: “Do not call this tool when the user is asking about X. Use [specific alternative] instead.”

Anthropic’s engineering blog describes how refinements to tool descriptions alone lifted Claude Sonnet to state-of-the-art on SWE-bench. No model changes. Just better descriptions.
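A small lint can flag descriptions that never say when not to call the tool. This is a minimal sketch: the marker phrases are an assumption about how exclusions tend to be worded, and the two weather tools are hypothetical.

```python
# Assumed phrasings that signal an exclusion sentence; extend for your own style.
EXCLUSION_MARKERS = ("do not ", "don't ", "never ", "not for ")


def missing_exclusion(tools: dict[str, str]) -> list[str]:
    """Names of tools whose description contains no exclusion phrase."""
    return sorted(
        name
        for name, description in tools.items()
        if not any(marker in description.lower() for marker in EXCLUSION_MARKERS)
    )


# Hypothetical tools: one with an exclusion sentence, one without.
tools = {
    "get_weather": "Returns current weather conditions for a city.",
    "get_forecast": (
        "Returns a multi-day forecast for a city. "
        "Do not call this tool when the user is asking about current conditions. "
        "Use get_weather instead."
    ),
}

print(missing_exclusion(tools))
```

A substring check like this catches only the absence of an exclusion, not whether the exclusion is the right one.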

3. Fix misleading parameter names

Rename parameters to match their actual scope. If a parameter only accepts structured record identifiers, name it record_id, not input or query. The name constrains interpretation. This is a one-line change with measurable impact on selection accuracy.
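As an illustration, here is a before-and-after input schema plus a tiny check for catch-all parameter names. The set of names treated as generic is an assumption for this sketch, and both schemas are hypothetical.

```python
# Assumed list of catch-all names; adjust to your own codebase.
GENERIC_NAMES = {"input", "query", "data", "value"}


def generic_params(schema: dict) -> list[str]:
    """Parameter names in a JSON-schema object that are on the generic list."""
    return sorted(name for name in schema.get("properties", {}) if name in GENERIC_NAMES)


# Before: the name says nothing about what the parameter accepts.
before = {
    "type": "object",
    "properties": {"query": {"type": "string"}},
    "required": ["query"],
}

# After: the name matches the parameter's actual scope.
after = {
    "type": "object",
    "properties": {
        "record_id": {
            "type": "string",
            "description": "Identifier of one existing record",
        }
    },
    "required": ["record_id"],
}

print(generic_params(before), generic_params(after))
```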

4. Fix indiscriminate calling

Add an explicit capability boundary to the description: “Call this tool only when the answer cannot be determined from conversation context alone.” This reduces unnecessary calls without suppressing the ones that are genuinely needed.
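To see whether a boundary sentence helps, it is useful to measure unnecessary calls directly. A minimal sketch, assuming each test case records the expected tool (or `None` when no tool is needed) and the tool the agent actually called. The tool names and sample data are hypothetical.

```python
from typing import Optional


def unnecessary_call_rate(
    expected: list[Optional[str]], actual: list[Optional[str]]
) -> float:
    """Share of no-tool-needed cases (expected is None) where a tool was called anyway."""
    no_tool_cases = [a for e, a in zip(expected, actual) if e is None]
    if not no_tool_cases:
        return 0.0
    return sum(a is not None for a in no_tool_cases) / len(no_tool_cases)


# Hypothetical results: three of the four queries needed no tool.
expected = [None, None, "get_weather", None]
actual = ["web_search", None, "get_weather", "web_search"]

print(f"{unnecessary_call_rate(expected, actual):.0%}")
```

Comparing this rate before and after a description change shows whether the boundary reduced needless calls. The cases where a tool was expected still need their own check to confirm that necessary calls were not suppressed.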

When a tool list grows beyond ten to twelve tools, the architectural fix is to distribute them across specialized sub-agents rather than load all of them into one context window. Each agent gets a narrow, coherent tool set. Selection accuracy improves because the candidate pool is smaller and semantically distinct. This is one of the core reasons single-agent architectures break down under real task complexity.
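One way to picture the split is a registry that maps each sub-agent to its own small tool set, with a size check. The sub-agent names, tool names, and the limit of 12 (the upper end of the range mentioned above) are hypothetical. Deciding which sub-agent handles a request is left out of this sketch.

```python
MAX_TOOLS_PER_AGENT = 12  # assumed limit, taken from the ten-to-twelve range above

# Hypothetical registry: each sub-agent sees only its own tools.
SUB_AGENTS: dict[str, list[str]] = {
    "billing": ["get_invoice", "issue_refund", "update_payment_method"],
    "shipping": ["get_order_status", "update_address", "schedule_pickup"],
}


def oversized_agents(
    sub_agents: dict[str, list[str]], limit: int = MAX_TOOLS_PER_AGENT
) -> list[str]:
    """Names of sub-agents whose tool list exceeds the limit."""
    return sorted(name for name, tools in sub_agents.items() if len(tools) > limit)


def tools_for(domain: str) -> list[str]:
    """Tool names to load into the context window of the chosen sub-agent."""
    return SUB_AGENTS[domain]


print(oversized_agents(SUB_AGENTS), tools_for("billing"))
```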

The description fix and the architectural fix are not alternatives. They work at different scales of the same problem.

For more on the layers that sit around selection, see the Labs pieces on building effective tool-calling functions and running tool-using agents reliably in production.

Building a Tool Selection Eval Before You Ship

Functional tests verify that a tool executes correctly when called. They do not check whether the model selected the correct tool to begin with. These are different failure modes, and in most agent development workflows only execution gets dedicated tests.

A minimal tool selection eval needs three things:

1. A fixed set of representative user queries. Twenty to thirty is enough to start.
2. The expected tool call for each query.
3. A pass/fail check comparing actual model output against the expected tool name and, where relevant, the expected parameter values.

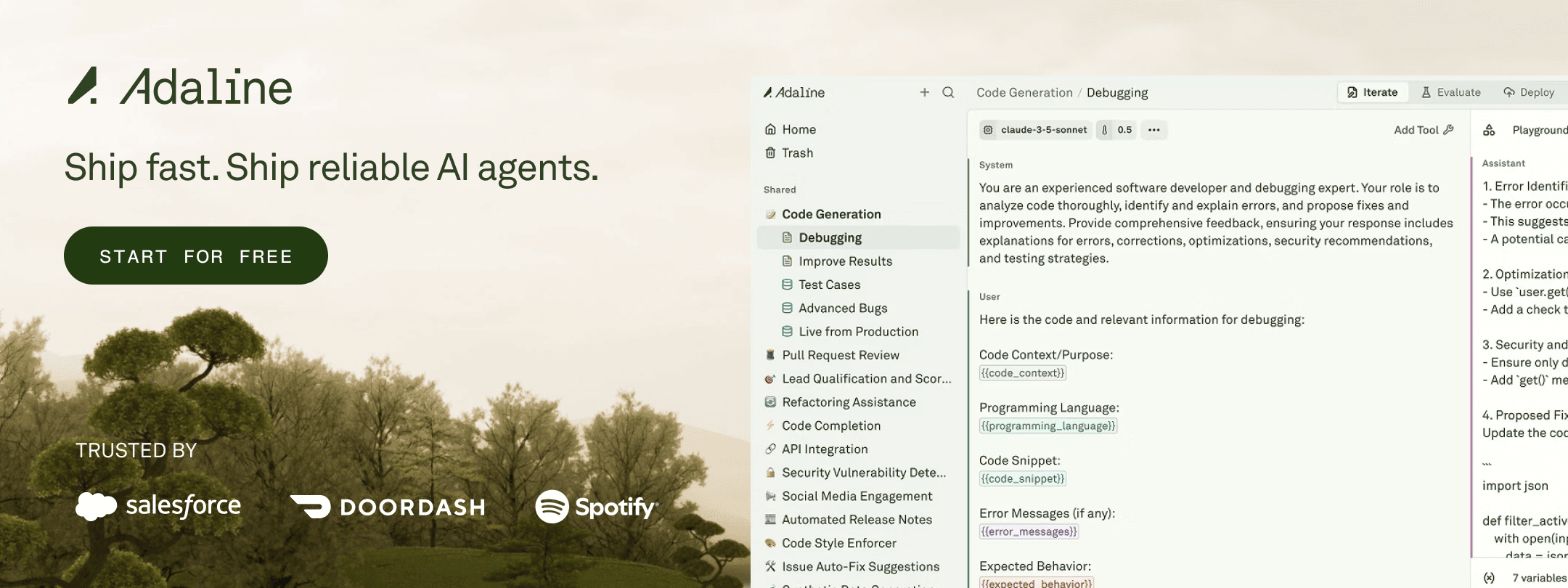
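The three pieces can be sketched in a few lines. This is a minimal harness under stated assumptions: `fake_select` is a hypothetical stand-in for your agent’s real tool-selection step, and the cases and tool names are invented for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Case:
    query: str
    expected_tool: Optional[str]
    expected_args: dict = field(default_factory=dict)  # only the keys listed here are checked


@dataclass
class Call:
    tool: Optional[str]
    args: dict = field(default_factory=dict)


def run_eval(cases: list[Case], select: Callable[[str], Call]) -> list[tuple]:
    """Return (query, expected_tool, actual_tool) for every failing case."""
    failures = []
    for case in cases:
        call = select(case.query)
        args_ok = all(call.args.get(k) == v for k, v in case.expected_args.items())
        if call.tool != case.expected_tool or not args_ok:
            failures.append((case.query, case.expected_tool, call.tool))
    return failures


def fake_select(query: str) -> Call:
    """Hypothetical stand-in for a model call; swap in your agent here."""
    if "find" in query.lower():
        return Call("search_files", {"pattern": "TODO"})
    return Call("bash", {"command": query})


cases = [
    Case("find TODO comments", "search_files", {"pattern": "TODO"}),
    Case("run the test suite", "bash"),
    Case("find and delete temp files", "bash"),
]

failures = run_eval(cases, fake_select)
print(f"{len(cases) - len(failures)}/{len(cases)} passed")
for query, expected, actual in failures:
    print(f"FAIL {query!r}: expected {expected}, got {actual}")
```

Keeping the case list in version control next to the tool definitions makes it easy to diff failures between runs.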

Run it every time you change a description, add a tool, or switch models. Selection behavior shifts across versions, and catching those regressions early is the point. Adaline’s evaluate loop is built for exactly this: running selection evals against your agent’s live tool configuration and surfacing regressions before they ship.

Wrong tool calls are a description problem, not a reasoning problem. The model is following the signals you gave it, and those signals are ambiguous. Write cleaner descriptions, add explicit exclusion boundaries, and build a selection eval before you ship. The model you have is capable enough. The bottleneck is the interface you gave it.