Why AI Took Coding Before Everything Else

Code has a built-in feedback loop that law, strategy, and design don't. That single property explains the automation sequence and maps which parts of the PM role automate next.

TLDR: AI automated coding before law, design, or strategy because code has a built-in feedback loop: you can run tests and know immediately whether it worked. That property, which barely exists anywhere else in knowledge work, is why autonomous AI iteration was possible in software first. Understanding that logic tells you what automates next and which parts of the PM role hold out longest. The shift is already reshaping how engineers work, what cognitive debt accumulates inside fast-moving teams, and what product leadership actually means when execution is no longer the constraint.

The most useful way to think about a large language model is this. It has read every textbook ever published. It executes tasks instantly. And it forgets everything that happened before the current conversation. It gives confident answers to questions it genuinely cannot answer. The confidence is the problem.

Product leaders have spent careers managing exactly this kind of person: the junior hire who executes fast but needs context, direction, and verification. What just changed is that this person now writes all the code.

This article explains why that happened — why coding automated first, before law, before strategy, before many other domains. It traces what that sequence reveals about where product leaders’ attention needs to go next.

Why AI Came for Coders First

The explanation is not that code is simpler than other knowledge work. The explanation is that code has a built-in verification loop that almost no other professional domain has. That loop made autonomous AI iteration possible in software before anywhere else.

When a model generates code, a test suite runs. The code either works or it doesn’t. That binary result tells the model exactly where it stands, without a human in the loop. The model generates, encounters a failure, reads the error message, revises, and runs again. This inner cycle closes on its own.
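The cycle described above can be sketched in a few lines. This is a minimal illustration, not how any particular coding agent is implemented: `generate` is a hypothetical stand-in for a model call (here it just walks through a fixed list of attempts), and the test cases are invented for the example.

```python
from typing import Callable, Optional, Tuple

Candidate = Callable[[int, int], int]


def run_tests(candidate: Candidate) -> Optional[str]:
    """Return None if every case passes, otherwise a readable error message."""
    cases = [((2, 3), 5), ((-1, 1), 0), ((0, 0), 0)]
    for args, expected in cases:
        try:
            got = candidate(*args)
        except Exception as exc:  # a crash is also a failing test
            return f"add{args} raised {exc!r}"
        if got != expected:
            return f"add{args} returned {got}, expected {expected}"
    return None


# Hypothetical stand-in for a model: canned attempts, two wrong and one right.
ATTEMPTS = [
    lambda a, b: a * b,
    lambda a, b: a - b,
    lambda a, b: a + b,
]


def generate(attempt: int, last_error: Optional[str]) -> Candidate:
    """A real model would condition on last_error; this stub ignores it."""
    return ATTEMPTS[attempt]


def iterate(max_attempts: int = 3) -> Tuple[int, Optional[str]]:
    """Generate, test, read the failure, revise. No human in the loop."""
    error: Optional[str] = None
    for attempt in range(max_attempts):
        candidate = generate(attempt, error)
        error = run_tests(candidate)
        if error is None:
            return attempt + 1, None  # the loop closed on its own
    return max_attempts, error


attempts_used, final_error = iterate()
print(attempts_used, final_error)
```

The point of the sketch is `run_tests`: it returns a verdict a machine can act on. A legal brief has no equivalent function to call.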

The same property does not exist in law.

As Simon Willison put it: “If you’re a lawyer, you’re screwed, right?”

A brief written by a model may be fluent, well-structured, and completely wrong about precedent, and no automated test can catch it. There is no failing test suite for a hallucinated citation. The error surfaces in court, months later, where the damage is real.

The same applies to medical reasoning, strategic advice, and most of what knowledge workers produce. Whether the output is correct requires a human who already understands the domain.

This distinction -- verifiable output versus output that needs expert judgment to check -- is the most important frame for thinking about the automation timeline:

The fastest-automated domains are those where correctness can be tested automatically.

Domains that hold out longest are those where correctness is ambiguous or can only be judged by someone who already knows the problem deeply.

For product leaders, this maps directly onto your own work. Features with measurable success signals will automate faster:

Conversion rates, error rates, and latency -- trackable, testable, automatable.

Work requiring judgment about ambiguous value holds out longest:

Deciding which roadmap item matters.

Aligning stakeholders around competing priorities.

Judging which user signal is real versus noise.

Verifiability is a strategic concept, and knowing which of your responsibilities falls into which bucket is now a planning skill.

The November 2025 Inflection

What changed, and why does that inflection matter to us?

November 2025 was not a moment of gradual improvement. It was a threshold crossing.

Models that had only handled simple, contained tasks suddenly became capable of working through complex, multi-file, deeply connected problems. Single files and narrow scope were no longer the ceiling. The models had crossed an invisible capability line where a whole new class of problems became solvable.

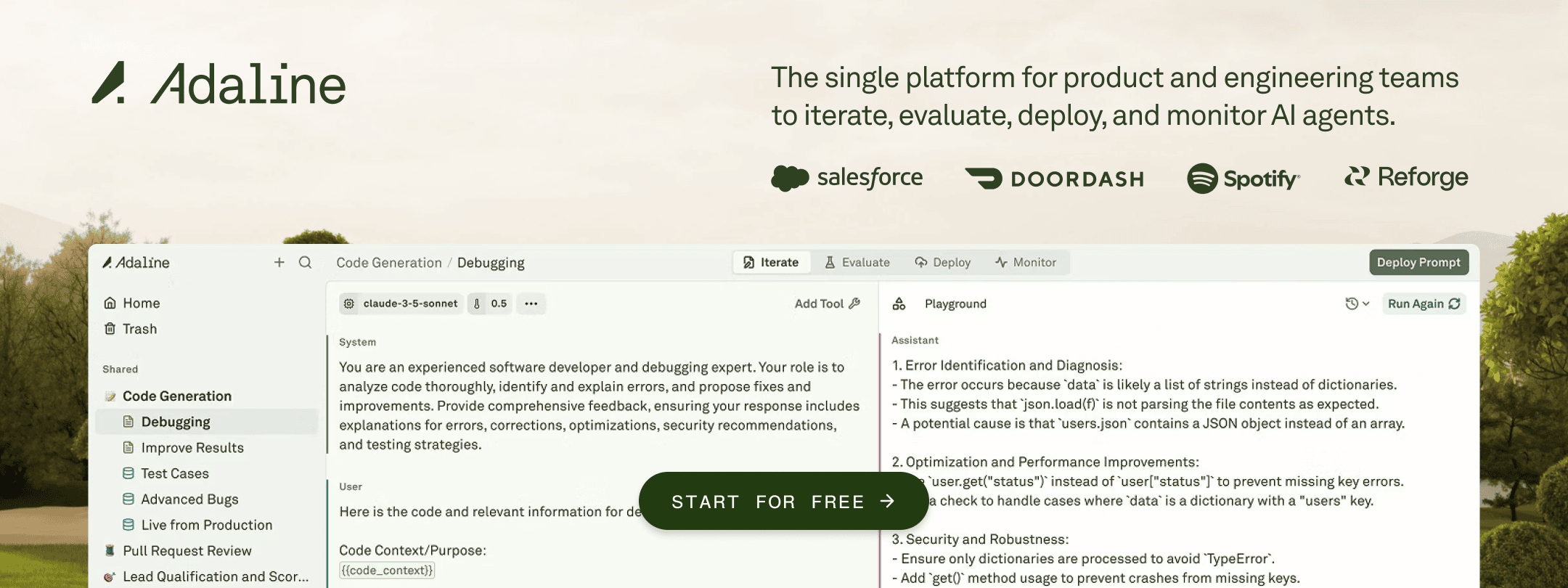

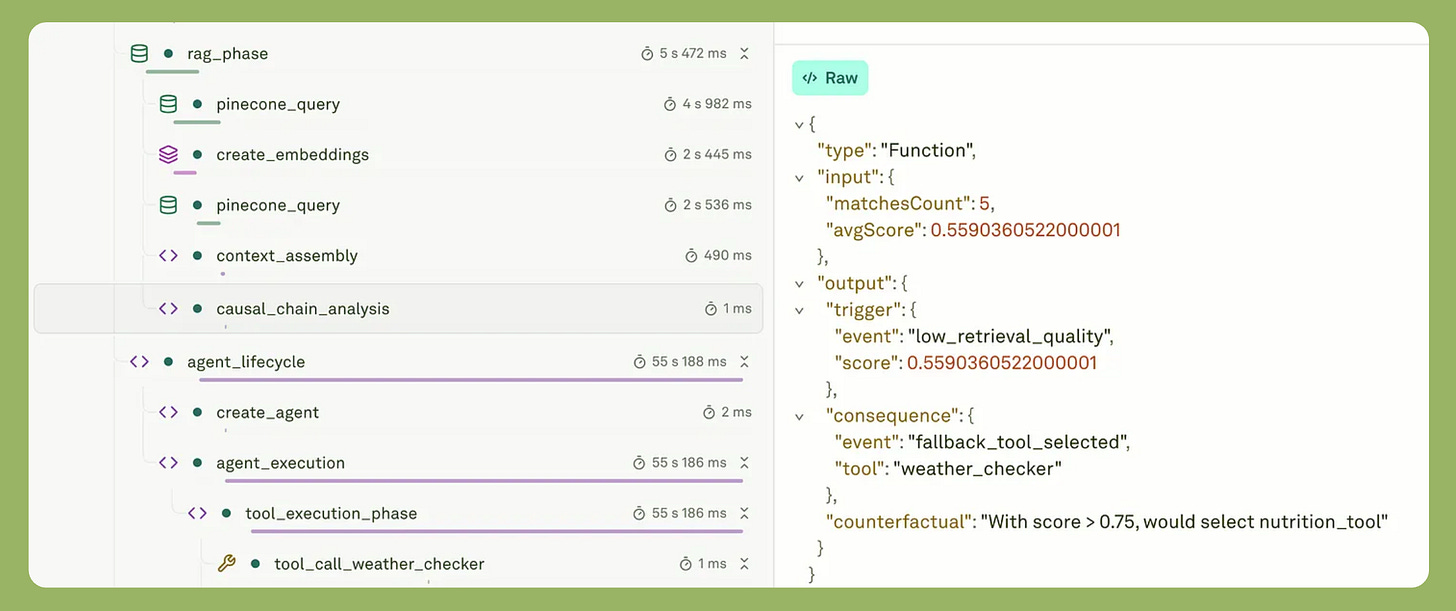

The clearest evidence came from inside the teams building these tools.

Boris Cherny, who created Claude Code at Anthropic, has not written a line of code by hand since November 2025. Every line in every pull request is written by the model. He ships ten to thirty pull requests a day. His contribution is not producing code; it is directing the agent and verifying its output.

For product leaders, the significance is not the output volume; it is what that volume implies about how engineers now experience their own job.

The mental model changed from “I write code, the model helps” to “I direct the agent, I verify the output.”

Engineers now spend most of their time on:

Reviewing model output for correctness and coherence.

Writing specifications precise enough for agents to act on.

Catching failures before they reach production.

They need more from product leadership as a result. This includes more precise direction, faster feedback cycles, and clearer success criteria. That need arrived ahead of most product roadmaps.

Most organizations are still structured for a world where the bottleneck was how fast engineers could write code. That bottleneck no longer exists. The constraint that replaced it is less visible, and it is already accumulating inside the teams that have moved fastest.

Cognitive Debt: The Hidden Cost Nobody’s Managing

There is a cost accumulating in engineering organizations right now that is not showing up on any dashboard: cognitive debt.

It is distinct from technical debt, and the distinction matters specifically for product leaders.

Technical debt is a code quality problem — poor architecture, shortcuts taken under pressure, messy implementations that need cleaning up later. Teams have managed this for decades.

Cognitive debt is different. Cognitive debt is a comprehension problem. It means the team has shipped something they cannot reason about.

For instance, a developer vibe-codes a feature in an afternoon. The feature works, passes tests, and ships on schedule. By every visible metric, the sprint was successful. But nobody on the team can predict what breaks when the next feature touches the same codebase.

Nobody can explain why the implementation made the choices it made. The shared mental model of the system — how it works and why — has degraded faster than the code itself.

Research into AI-assisted development teams documented exactly this pattern: teams hit a wall mid-project, unable to make simple changes without breaking something unexpected. The real problem was not code quality; it was that no one could explain why key design decisions had been made. They had accumulated cognitive debt faster than technical debt, and it paralyzed them.

Product managers feel cognitive debt first. It shows up as:

Estimates that consistently miss.

Regressions with no clear cause.

Features that cannot be extended without a full rebuild.

This is why observability stops being an engineering cost and becomes a product input. Trace data, eval systems, and production logs are how a product leader keeps enough understanding of a fast-moving, AI-written system to make planning honest.

The PM who reads what the product is actually doing in production is managing cognitive debt. The PM who only reviews finished features is not.

What Design’s Collapse Reveals About the Whole Stack

The compression happening in engineering is not isolated. It is happening across every function simultaneously, and design is the clearest case study.

Jenny Wen, who leads design for Claude at Anthropic and was previously Director of Design at Figma, documented this compression directly.

A few years ago, 60-70 percent of her team’s time went into mocking and prototyping. That number is now 30-40 percent. The recovered time went into working directly alongside engineers: polishing implementations as they were built and doing the last-mile work the old handoff model assumed someone else would handle.

In other words, execution compressed, and the role compressed with it.

Her Hatch Conference keynote conveys a deeper point: in a world where anyone can build anything quickly, the scarce skill is no longer execution — it is curation.

And it is turning out to be true.

Choosing what to build matters more than being able to build it. And because building in the wrong direction now costs days instead of months, the PM’s old job of gating engineering with a complete spec matters less. The scarce judgment is upstream: which directions are worth exploring at all.

Two insights from this shift reach beyond design.

First, non-deterministic products break the specification model.

You cannot write a complete spec for an AI feature because the product’s behavior is not fixed; it is a range. What users experience depends on the model, the prompt, and the context, and you cannot fully anticipate that combination in advance.

A PM writes acceptance criteria for a summarization feature: three sentences, neutral tone, key date included.

The model produces a four-sentence summary in active voice that users find more useful than the spec required. The PRD was right about the goal and wrong about every constraint.

That is what structural mismatch looks like in practice.
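One way to picture the mismatch is to write the same acceptance criteria twice: once as fixed constraints and once as a range around the goal. The sketch below is illustrative only. The sample summary, the date, the sentence bounds, and the function names are all assumptions made up for the example, and tone is left out because it cannot be checked with a simple rule.

```python
import re


def sentence_count(text: str) -> int:
    """Naive count: split on runs of '.', '!' or '?' and drop empty pieces."""
    return len([part for part in re.split(r"[.!?]+", text) if part.strip()])


def strict_check(summary: str, key_date: str) -> bool:
    """The PRD as written: exactly three sentences, key date included."""
    return sentence_count(summary) == 3 and key_date in summary


def range_check(summary: str, key_date: str,
                min_sentences: int = 2, max_sentences: int = 5) -> bool:
    """The goal as a range: short, and the key date must appear.

    The bounds are assumptions chosen for illustration, not recommendations.
    """
    count = sentence_count(summary)
    return key_date in summary and min_sentences <= count <= max_sentences


# Hypothetical four-sentence model output.
summary = (
    "The vendor contract renews on 2026-03-01. "
    "Pricing rises by eight percent. "
    "Legal flagged the new liability clause. "
    "Finance needs a decision a month before renewal."
)

print(strict_check(summary, "2026-03-01"))  # fails the fixed spec
print(range_check(summary, "2026-03-01"))   # passes the goal-level check
```

The strict check rejects an output users would prefer, while the range check keeps the part of the spec that encoded the goal (the date must be there, the summary must stay short) and drops the part that was only a guess about form.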

Specification used to come before execution. Now they run in parallel, and the PM’s job is direction, not permission.

Second, the vision horizon has collapsed.

The two-to-five-year product roadmap is obsolete for teams running at AI execution speed. What replaces it is a three- to six-month directional prototype. It has to be concrete enough to keep teams pointed at the same thing and short-term enough to be revised when model capabilities shift.

Product planning built on annual cycles is misaligned with teams that ship daily. The planning unit needs to compress to match the execution unit, or the roadmap becomes fiction nobody trusts. That directional prototype is now the PM’s primary planning artifact. It is neither a detailed spec nor an annual roadmap, but a direction concrete enough to keep fast-moving teams aligned and short enough to stay honest.

Where the PM’s Job Shifts First

These are behavioral changes, grounded in what the evidence above actually shows.

Build for the model’s timeline, not yours.

The principle is simple: design for where the model will be in six months, not where it is today. The capability ceiling rises every quarter. Features that feel out of reach for AI execution right now will be routine within two planning cycles. Roadmaps that treat current AI capabilities as fixed points will be wrong by the time they ship.

Shift your verification energy up the stack.

Engineers now spend more time reviewing model output than writing code. Your attention should move too — from reviewing shipped features to understanding what your team actually comprehends about what was built. The cognitive debt frame makes this concrete.

Your job is not just to catch bad output; it is to maintain enough shared understanding of the system so that planning stays honest. The PM who can explain how the system works, not just what it does, is the PM whose estimates hold up.

Treat latent demand as a real-time signal.

With AI products, the signal of what users actually want appears in production before it appears in research. Users encounter non-deterministic behavior and improvise workarounds in real time, and those workarounds are data.

With language model products, you discover use cases by watching people use them, not by specifying them in advance. The PM who builds this habit — reading trace data, support patterns, and user workarounds regularly — will identify the next right feature before a formal research cycle has time to name it.

Related: AI took coding first, which means coding agents are also the furthest along in terms of what good evaluation looks like. The full evaluation framework lives here: How To Evaluate Coding Agents In Production.

Closing

The weird, overconfident intern who has read every textbook can now write all the code. That changes execution permanently.

But what does not change is the judgment layer. That layer is now visible in a way it has never been before, precisely because execution has automated around it.

The intern cannot:

Decide what is worth building.

Know when a system that no one on the team fully understands is about to fail in production.

Read the signal in a user’s workaround that the product should have been built differently.

Hold a vision long enough to keep a fast-moving team pointed at the same thing across a quarter.

Those are product skills. The execution layer has been automated. Judgment is the job.