How To Design AI Features For Nondeterminism

Why variance, drift, and reasoning failures are not engineering problems, and how to design around them before you ship.

TLDR: Nondeterminism is not an edge case in LLM-powered products: it is the default. This blog defines the three types of production failure (output variance, behavioral drift, and reasoning-level failure), diagnoses the three design failures that cause damage, and walks through how to write a spec for a probabilistic feature. In essence, that means shifting from expected output to acceptance criteria, from test cases to test distributions, and from “works” to “fails by design.” If your AI PRD lacks an acceptance threshold section, it is not yet an AI PRD. Reliable AI features in 2026 are not built by teams with the best models. They are built by teams who designed for the day the model behaved unexpectedly.

The feature shipped cleanly. It passed QA, cleared stakeholder review, and ran without incident in staging. But three days after launch, a user forwarded a screenshot with a support ticket.

The AI had returned something the team could not explain. It was just different from anything it had produced before. When the engineer pulled the logs, everything checked out: status 200, normal latency, token count within range, no exception anywhere in the stack.

The model had simply behaved differently. That is not a bug. It is a design problem, a direct consequence of the probabilistic nature of AI. And until you and your team accept that framing, every audit will lead to the wrong conclusion.

What Nondeterminism Actually Means for Product Teams

Here are three things that you, as a product leader, should be familiar with.

Output Variance: It is the most familiar. The same input, run twice against the same model, produces two different outputs. In summarisation tasks, copy generation, and classification, this is not an edge case. It is the default behavior of every probabilistic system. Many of us know it exists, but almost none of us design for it deliberately.

Behavioral Drift: It is the one that blindsides teams after launch. A feature works correctly at release, and a few weeks later, something is off with no code changes anywhere. A model update, a shift in user input patterns, or a prompt encountering inputs it was never tested against can each trigger it. The team learns about it from user complaints, not from its own monitoring.

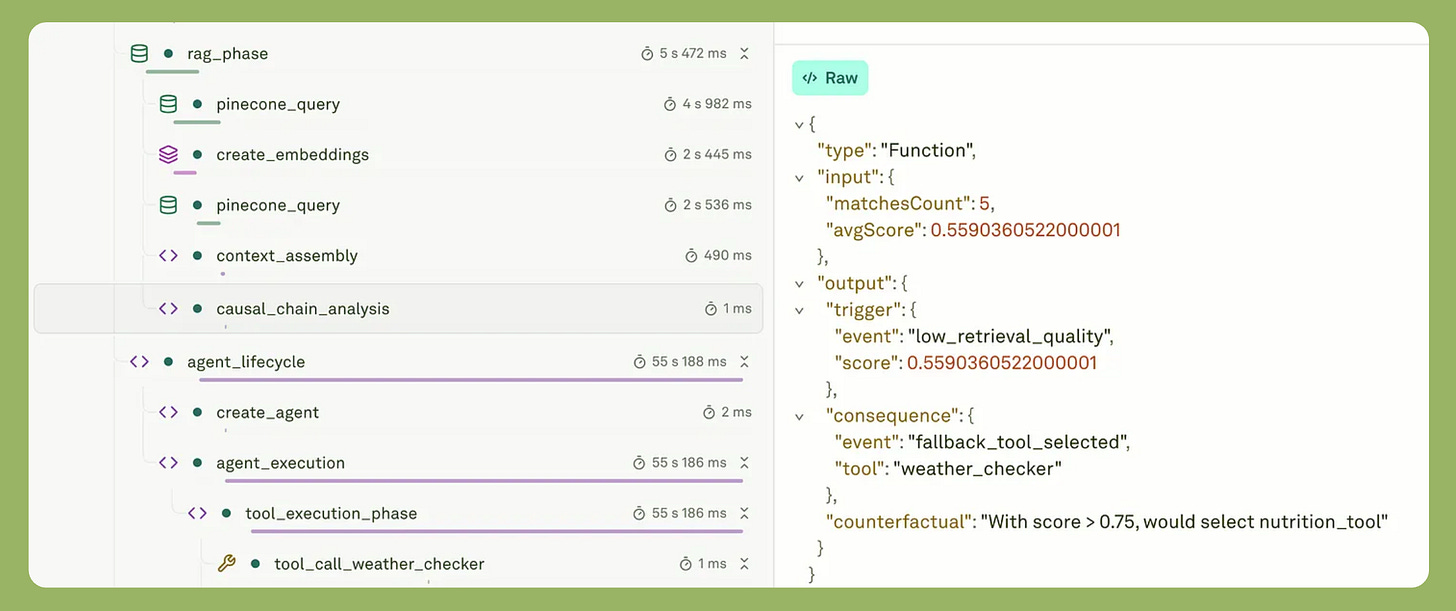

Reasoning-Level Failure is the hardest to catch because it produces no visible error. Our blog on Observability vs. Monitoring for Agentic AI describes this precisely: “retrieval works, tool calls complete, the model responds, but the combination of those steps produces a result that is wrong for the actual task. Monitoring shows all green. [But] the product fails.”

Nondeterminism is not a bug to fix. It is a constraint to design around, just as great product teams design around latency, mobile screen size, or network reliability.

Why Agents and Modern Models Make This Harder

A single nondeterministic call is manageable. An agent making sequential tool calls compounds the problem at every step. One failed retrieval can cascade into four downstream failures: wrong tool selection, then incomplete data, then confabulated gap-filling, then a correction loop.

You cannot write alerts for failure states you have never seen before. The blast radius of nondeterminism is proportional to agent autonomy.
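As a rough illustration of why sequential steps compound, the sketch below assumes every step succeeds independently with the same probability. Both the independence assumption and the 95 percent figure are illustrative choices, not measurements from any real agent.

```python
def chain_success_rate(step_success: float, steps: int) -> float:
    """Probability that every step in a sequential chain succeeds,
    assuming steps fail independently (a simplifying assumption)."""
    if not 0.0 <= step_success <= 1.0:
        raise ValueError("step_success must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return step_success ** steps


# Hypothetical per-step reliability of 95 percent across chains of growing length.
for n in (1, 5, 10, 20):
    print(f"{n:>2} steps at 95% each: {chain_success_rate(0.95, n):.1%}")
```

Under these assumptions, a ten-step chain completes cleanly only about 60 percent of the time, even though each individual step looks reliable in isolation.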

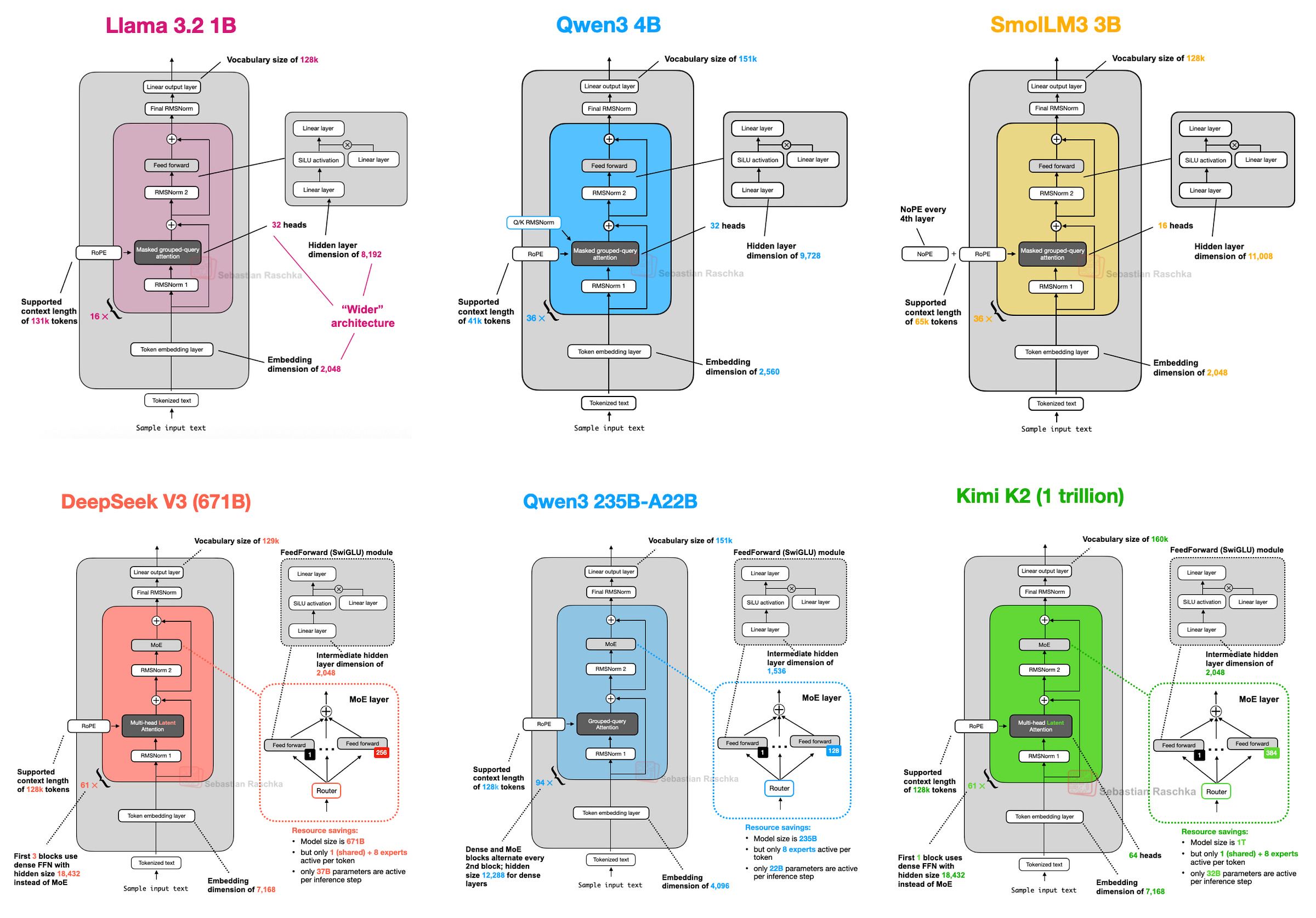

Modern model architecture adds a layer that most product leaders do not account for. Mixture-of-Experts models like Qwen3, GLM-4.5, and DeepSeek V3 do not activate all of their parameters for every inference step. A routing mechanism selects a small subset of active experts per token. Sebastian Raschka’s Big LLM Architecture Comparison shows that DeepSeek V3 activates roughly 37 billion of its 671 billion parameters per step, because only 9 experts are active at a time: 8 selected by the router from 256, plus 1 shared expert.

That means two nearly identical prompts can route to different expert combinations and produce meaningfully different outputs. This is architecture-level variance. It is not configurable.
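To make the routing idea concrete, here is a toy sketch of top-k expert selection. It is not the router of any specific model: the six experts, the scores, and the choice of k are made up for illustration, and real routers operate on learned gating weights per token.

```python
def top_k_experts(router_scores: list[float], k: int) -> list[int]:
    """Return the indices of the k highest-scoring experts, in ascending index order."""
    if not 0 < k <= len(router_scores):
        raise ValueError("k must be between 1 and the number of experts")
    ranked = sorted(range(len(router_scores)), key=lambda i: router_scores[i], reverse=True)
    return sorted(ranked[:k])


# Hypothetical router scores for two nearly identical tokens over six experts.
scores_a = [0.10, 0.42, 0.05, 0.41, 0.40, 0.02]
scores_b = [0.10, 0.39, 0.05, 0.41, 0.40, 0.02]  # only expert 1's score moved

print(top_k_experts(scores_a, k=2))  # experts 1 and 3
print(top_k_experts(scores_b, k=2))  # experts 3 and 4
```

A shift of 0.03 in one score is enough to swap which experts handle the token, which is the kind of sensitivity the paragraph above describes.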

Reasoning models add a third dimension.

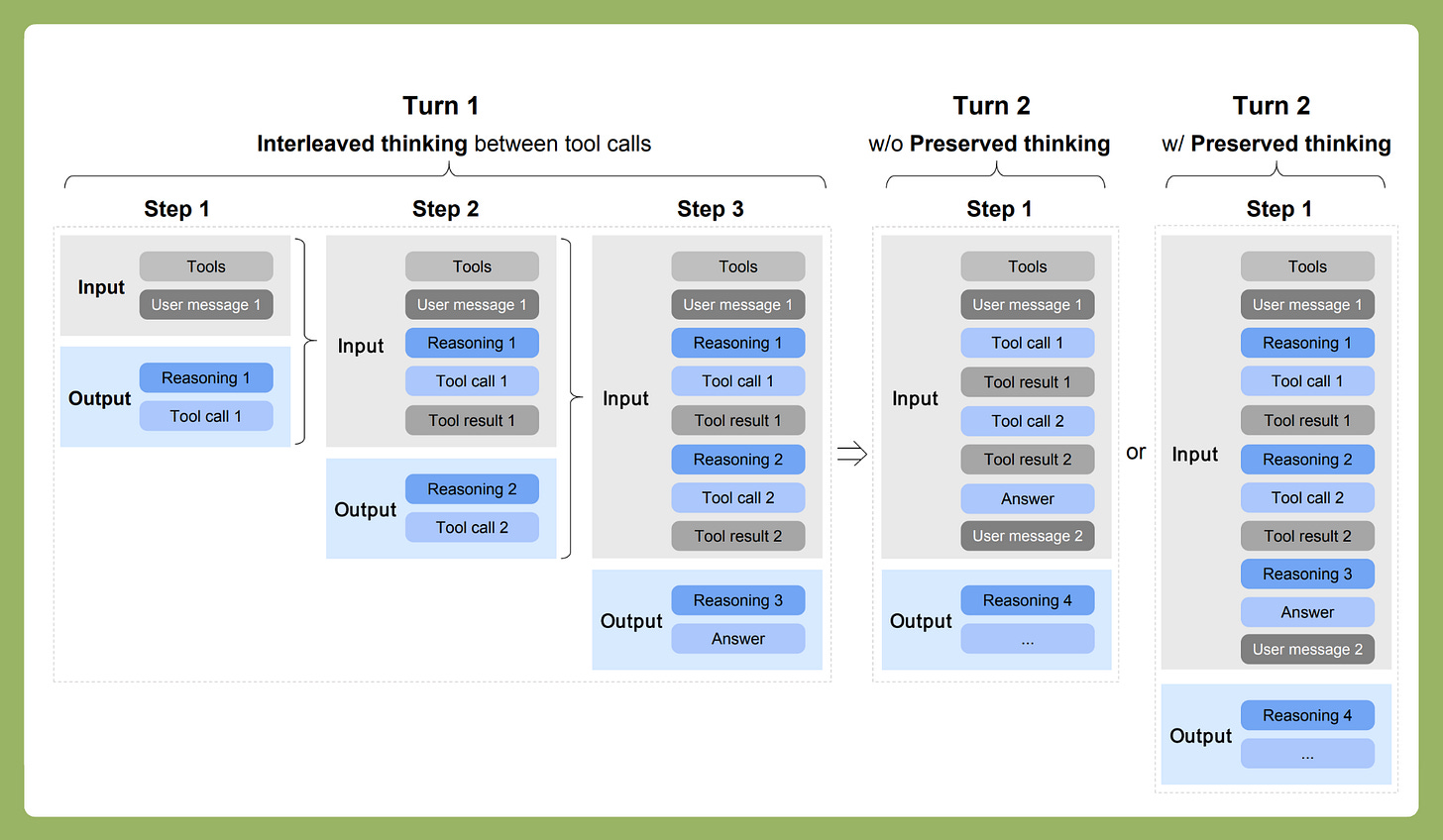

These models generate an internal chain-of-thought before responding, and that chain is itself variable. The GLM-5 technical report makes this explicit. The model shipped a Preserved Thinking mode specifically to retain reasoning context across conversation turns and prevent cross-turn drift.

When model builders start engineering against a failure mode at the architecture level, that failure mode is real.

The question is not whether your AI feature will behave differently over time. The question is whether you designed for it.

The Three Design Failures Teams Make

Failure 1: Hiding Variance Instead of Surfacing It

Teams build UX that treats the AI as deterministic: no regenerate button, no confidence framing, no acknowledgment that the same question might produce a different answer tomorrow.

When variance surfaces, users experience it as a bug and report it as one. Support tickets pile up for behavior that is technically correct. As we have explained elsewhere, the same input does not guarantee the same output, because temperature introduces randomness by design.
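For readers who want to see where that randomness comes from, the following is a minimal sketch of temperature-scaled sampling over next-token candidates. The tokens and logits are hypothetical, and production decoders layer further steps (such as top-p filtering) on top of this.

```python
import math
import random


def softmax_with_temperature(logits: list[float], temperature: float) -> list[float]:
    """Convert logits to probabilities; lower temperature sharpens the distribution."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_token(tokens: list[str], logits: list[float],
                 temperature: float, rng: random.Random) -> str:
    """Draw one token according to the temperature-scaled distribution."""
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(tokens, weights=probs, k=1)[0]


tokens = ["refund", "replace", "escalate"]  # hypothetical next-token candidates
logits = [2.0, 1.5, 0.5]                    # hypothetical model scores
rng = random.Random()                       # unseeded, so repeated runs differ

print([sample_token(tokens, logits, 1.0, rng) for _ in range(5)])
print([sample_token(tokens, logits, 0.1, rng) for _ in range(5)])
```

At temperature 1.0 the top candidate wins only a little over half the time in this toy setup. At 0.1 it wins almost always, which reduces variance but does not remove the other sources of nondeterminism discussed earlier.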

The product response is not to hide this. It is to design around it. “Here is one way to think about this” frames output differently than “Here is your answer.” A regenerate button signals that trying again is normal, not a sign that something broke. The goal is calibrated trust: not blind trust, not distrust, but calibrated.

Failure 2: Writing Binary Acceptance Criteria

Here is how it usually goes. The PRD says "the AI returns a correct answer." QA runs three test cases, marks them green, and the feature ships. Nobody questions what "correct" actually means, because it felt obvious in the room.

Three weeks later, production surfaces a failure pattern nobody can reproduce, because the test cases were not a “distribution.” They were essentially a demo.

A demo compresses all the variability of production into a single scenario, hiding messy inputs, long-tail formats, and drift. A prompt can look stable on five hand-picked examples, then break the day a new user arrives with a different intent.

The fix is defining success as a rate, not a binary. Instead of “the AI returns a correct answer,” write: “the AI passes this rubric on at least 90 percent of real production inputs.”

Nine out of ten is a target you can measure. It is also a target that can degrade over time, which means you will know when it does.
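A rate-based criterion like this can be checked mechanically. The sketch below assumes each eval result has already been reduced to a pass or fail against the rubric. The function names and the sample run are illustrative.

```python
def pass_rate(results: list[bool]) -> float:
    """Fraction of evaluated outputs that passed the rubric."""
    if not results:
        raise ValueError("cannot compute a pass rate over zero results")
    return sum(results) / len(results)


def meets_threshold(results: list[bool], threshold: float = 0.90) -> bool:
    """True when the observed pass rate is at or above the acceptance threshold."""
    return pass_rate(results) >= threshold


# Hypothetical eval run: 20 inputs, 18 passed the rubric.
run = [True] * 18 + [False] * 2
print(f"pass rate: {pass_rate(run):.0%}, meets threshold: {meets_threshold(run)}")
```

With only 20 cases, a single failure moves the rate by five points, so a small eval set gives a coarse estimate of the true production rate.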

LLM-as-a-judge, where a model scores outputs against defined criteria for accuracy, relevance, and instruction adherence, is one of the few evaluation mechanisms that scale when there is no single correct output.
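One way to structure such a judge is sketched below. The judge is passed in as a plain function so the example runs without any model access. In practice it would wrap a model call. The 1 to 5 scale, the `min_score` cutoff, and `stub_judge` are assumptions made for illustration.

```python
from typing import Callable

# A judge takes (input, output, criterion) and returns a score from 1 to 5.
# In a real system this would wrap a model call; here it is a stub.
Judge = Callable[[str, str, str], int]

CRITERIA = ("accuracy", "relevance", "instruction adherence")


def passes_rubric(user_input: str, output: str, judge: Judge, min_score: int = 4) -> bool:
    """An output passes only if every criterion scores at or above min_score."""
    return all(judge(user_input, output, criterion) >= min_score for criterion in CRITERIA)


def stub_judge(user_input: str, output: str, criterion: str) -> int:
    """Placeholder for a model-backed judge: it only penalizes empty outputs."""
    return 5 if output.strip() else 1


print(passes_rubric("Summarize the ticket", "Customer wants a refund.", stub_judge))
print(passes_rubric("Summarize the ticket", "   ", stub_judge))
```

Scoring each criterion separately keeps failures diagnosable: a drop in the pass rate can be traced to accuracy, relevance, or instruction adherence instead of a single opaque grade.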

Failure 3: Treating Fallback as an Afterthought

The spec says, “display error message if the AI fails,” on a single line, and then moves on.

But failure in a nondeterministic system is rarely binary.

The AI responds, but sometimes it responds badly. Silent failures do not crash anything, but they erode trust, safety, and budget a little at a time, until users stop believing the feature works at all.

The fix is designing three explicit fallback tiers before the first sprint begins.

Soft fallback delivers a simpler and narrower output at low confidence.

Human handoff routes high-stakes or ambiguous cases to a person. Essentially, think of it as human-in-the-loop.

Silent skip does nothing rather than do something wrong.

The choice between these three is a product decision. It belongs in the PRD.
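The three tiers can be expressed as a small routing function. This is a sketch under stated assumptions: it presumes the feature already produces a confidence score between 0 and 1, and the cutoffs of 0.7 and 0.3 are hypothetical values a team would set from its own data.

```python
from enum import Enum


class Fallback(Enum):
    NONE = "show full output"
    SOFT = "show simpler, narrower output"
    HUMAN_HANDOFF = "route to a person"
    SILENT_SKIP = "show nothing"


def choose_fallback(confidence: float, high_stakes: bool,
                    soft_below: float = 0.7, skip_below: float = 0.3) -> Fallback:
    """Pick a fallback tier from a confidence score and a stakes flag."""
    if high_stakes and confidence < soft_below:
        return Fallback.HUMAN_HANDOFF  # uncertain and consequential: a person decides
    if confidence < skip_below:
        return Fallback.SILENT_SKIP    # too uncertain to show anything
    if confidence < soft_below:
        return Fallback.SOFT           # uncertain but low stakes: narrow the output
    return Fallback.NONE


print(choose_fallback(0.9, high_stakes=False).value)
print(choose_fallback(0.5, high_stakes=False).value)
print(choose_fallback(0.5, high_stakes=True).value)
print(choose_fallback(0.1, high_stakes=False).value)
```

The ordering of the checks is itself a product decision. Here high-stakes cases are handed to a person before the silent skip is considered, which is one reasonable choice among several.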

How to Write a Spec for a Probabilistic Feature

There are three concrete shifts that separate a spec for a deterministic feature from a spec for a probabilistic one. Each shift changes what you ship.

From expected output to acceptance criteria.

The wrong spec line reads: “The AI returns a correct summary.” The right version reads: “The AI produces a summary that passes the following rubric on 90 percent of a representative input set.”

The difference forces the team to agree on what “good” means before building, not after shipping. Our blog on Prompt Management for Product Leaders makes the point directly: evaluation is the key to iteration, and you cannot iterate toward a target you have not defined.

We also recommend another post of ours, “AI Observability and Evaluations,” which covers how to build a system that makes those improvements trackable.

From test cases to test distributions.

A single test case is a demo.

A distribution is a product.

Effective evaluation starts with roughly 20 representative cases that reflect actual production input. These are not clean happy-path cases but the messy inputs, edge formats, and ambiguous queries that real users send.

This starting set expands over time using production traces, not gut instinct. The spec should state where the initial eval set comes from before development begins.
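A minimal sketch of growing the eval set from production evidence follows. It assumes failed production inputs have already been collected from traces. The `EvalCase` structure and the case-insensitive duplicate check are illustrative choices, not a prescribed format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EvalCase:
    user_input: str
    source: str  # "seed" for the initial set, "production" for trace-derived cases


def grow_eval_set(eval_set: list[EvalCase], failed_inputs: list[str]) -> list[EvalCase]:
    """Return a new eval set with failed production inputs appended, skipping duplicates."""
    seen = {case.user_input.strip().lower() for case in eval_set}
    grown = list(eval_set)
    for text in failed_inputs:
        key = text.strip().lower()
        if key and key not in seen:
            grown.append(EvalCase(user_input=text, source="production"))
            seen.add(key)
    return grown


seed = [EvalCase("summarize this email thread", "seed")]
failures = [
    "Summarize this email thread",    # already covered by the seed set
    "tl;dr of the attached pdf??",    # new, messy production input
    "tl;dr of the attached pdf??",    # repeat of the same failure
]
print(len(grow_eval_set(seed, failures)))
```

Recording the source of each case makes it easy to report how much of the eval set comes from real usage versus the original seed.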

From “works” to “fails by design.”

Every AI feature spec should include a Failure Modes section that answers three questions:

What does the feature do when the output confidence is low?

What happens when a tool times out?

What does the user see when the AI produces output outside the acceptable range?

These are product decisions. They belong in the spec, not in a Slack thread three weeks after launch.
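The tool timeout question, for instance, can be answered in code once the product decision is made. The sketch below bounds how long the caller waits and returns a user-facing fallback message. The timeout value, the fallback text, and `slow_tool` are hypothetical.

```python
import concurrent.futures
import time
from typing import Callable


def call_tool_with_timeout(tool: Callable[[], str], timeout_s: float, fallback: str) -> str:
    """Run a tool call in a worker thread; return the fallback text if it overruns.

    The worker thread is not killed. This only bounds how long the caller waits.
    """
    executor = concurrent.futures.ThreadPoolExecutor(max_workers=1)
    future = executor.submit(tool)
    try:
        return future.result(timeout=timeout_s)
    except concurrent.futures.TimeoutError:
        return fallback
    finally:
        # Do not block the caller waiting for a slow tool to finish.
        executor.shutdown(wait=False)


def slow_tool() -> str:
    time.sleep(0.5)  # stands in for a hung downstream service
    return "tool result"


print(call_tool_with_timeout(slow_tool, timeout_s=0.05, fallback="Search is unavailable right now."))
print(call_tool_with_timeout(lambda: "tool result", timeout_s=1.0, fallback="unused"))
```

What the fallback string says, and whether the user sees it at all, is exactly the kind of decision the Failure Modes section should record.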

If your AI PRD does not have an acceptance threshold section, it is not yet an AI PRD. For a complete structural template, the AI PRD guide walks through exactly what that section should contain.

Observability Is the Runtime Layer

Good threshold design requires knowing what the production distribution actually looks like. Traditional monitoring cannot tell you.

Observability vs. Monitoring for Agentic AI documents the issue precisely: status codes, response times, and token counts can all show green while the product is failing. The agent may be retrieving irrelevant content, calling the wrong tool seventeen times, or filling its context window with garbage. None of that surfaces in an infrastructure dashboard.

The design decisions from the previous sections only hold up if the team can see what is happening at the level of reasoning.

Fallback triggers cannot be calibrated without traces that show where and why failures happen. The real value of a proper observability layer is the ability to ask new questions about old data, tracing a bad decision back through every tool call, every retrieval step, and every token that shaped the final output.
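As a small example of asking a new question of old data, the sketch below scans a recorded trace for tools that were called suspiciously often. The `TraceStep` fields and the limit of three calls are assumptions for illustration. Real tracing systems record far richer spans.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class TraceStep:
    kind: str    # "retrieval", "tool_call", or "generation"
    name: str    # the tool or index that was used
    detail: str  # free-text note: query, arguments, or output summary


def repeated_tool_calls(trace: list[TraceStep], max_calls: int = 3) -> dict[str, int]:
    """Return tools called more than max_calls times within a single trace."""
    counts = Counter(step.name for step in trace if step.kind == "tool_call")
    return {name: n for name, n in counts.items() if n > max_calls}


# Hypothetical trace: one retrieval, the same tool called 17 times, then an answer.
trace = (
    [TraceStep("retrieval", "kb_search", "query='refund policy'")]
    + [TraceStep("tool_call", "lookup_order", "order_id missing")] * 17
    + [TraceStep("generation", "final_answer", "apology with no order details")]
)
print(repeated_tool_calls(trace))
```

Every request in this trace could have returned status 200. The loop only becomes visible once the steps themselves are recorded and queried.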

The three fallback tiers described above need threshold data to stay correctly calibrated as the feature evolves in production.

That data comes from traces, not from the test suite.

The spec defines what acceptable behavior looks like. Observability tells you whether you are getting it. For how to instrument this at the agent level, the Observability vs. Monitoring for Agentic AI post is the companion read to this blog.

A Checklist for Product Leaders

Before you spec:

Have you defined what “acceptable output” looks like as measurable criteria, not as a description?

Have you named the three failure types for this specific feature: output variance, behavioral drift, and reasoning-level failure?

Have you designed all three fallback states: soft fallback, human handoff, and silent skip?

Have you decided which failure modes are acceptable and which are not before the first sprint begins?

Before you ship:

Does your eval set reflect real production inputs, not just the clean demo cases?

Have you run evaluations at the failure boundary, testing what happens when confidence drops or a tool times out?

Is observability instrumented to capture why a decision happened, not just that it happened?

Does QA know that “cannot reproduce” is not a reason to close an AI ticket?

After you ship:

Are behavioral threshold alerts set, not just infrastructure metric alerts?

Is there a post-incident process for AI failures that traces back to the original spec?

Is the eval set growing from production evidence on a defined cadence?
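A behavioral threshold alert from the checklist above could look like the sketch below: a rolling pass rate over recent production outputs, compared against the acceptance threshold from the spec. The window size, minimum sample count, and class name are hypothetical.

```python
from collections import deque


class PassRateMonitor:
    """Tracks rubric pass/fail over the most recent outputs and flags drops."""

    def __init__(self, window: int = 200, threshold: float = 0.90, min_samples: int = 50):
        self.results = deque(maxlen=window)  # oldest results fall off automatically
        self.threshold = threshold
        self.min_samples = min_samples

    def record(self, passed: bool) -> None:
        self.results.append(passed)

    def should_alert(self) -> bool:
        """Alert only once enough samples exist and the rolling rate is below threshold."""
        if len(self.results) < self.min_samples:
            return False
        return sum(self.results) / len(self.results) < self.threshold


# Small numbers so the example is easy to follow: 6 passes, then 4 failures.
monitor = PassRateMonitor(window=10, threshold=0.9, min_samples=5)
for passed in [True] * 6 + [False] * 4:
    monitor.record(passed)
print(monitor.should_alert())
```

The minimum sample count keeps the alert quiet right after a deploy, when a handful of early failures would otherwise trip it.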

Closing

The teams shipping reliable AI features in 2026 are not the ones with access to better models. Open-source models like Qwen3, GLM-4.5, DeepSeek V3, and Kimi K2.5 have made agents faster and more capable, and so have closed-source models like GPT 5.4, Claude 4.5, and Gemini 3.

All of them are suited to longer-horizon tasks than anything available a year ago. Sebastian Raschka’s Big LLM Architecture Comparison documents labs claiming reasoning systems that can sustain autonomous task execution for thirty hours straight.

That is a genuine capability expansion. It does not solve the product design problem. Capability and reliability are different problems, and the industry conflates them constantly. What separates good AI product teams from great ones is not the model they chose. It is whether they wrote a spec for the day the model behaved unexpectedly.