Agent Memory Is A Product Surface, Not Saved Chat History

Production agents need memory rules for what to remember, what to forget, when to retrieve context, and how to debug the decisions memory changes.

TL;DR: Agent memory is not saved in chat history. It is not a longer context window either. It is a product decision, one that most teams are making badly or not at all. This blog breaks down the four scopes of agent memory (user, task, project, and operational), the governance rules every production team needs before shipping, and the six failure modes that occur when those rules are missing. You will also find a practical memory spec checklist and a look at how frontier models like Claude Opus 4.7 and GPT-5.5 are handling — and not handling — the memory problem in 2026.

Agent Memory is a Product Surface, Not Saved Chat History

Coding agents, research agents, customer support agents, operations agents. They are no longer doing one task and stopping. They resume work across sessions, carry decisions forward across tools, and operate inside live workflows with real stakes.

“The context window becomes the new programming surface. You are no longer only writing deterministic instructions for a computer. You are giving context to an intelligent interpreter that can read, reason, call tools, inspect environments, debug errors, and adapt,” — Andrej Karpathy framed the shift precisely in his From Vibe Coding to Agentic Engineering talk.

This essentially changes what memory must do.

When an agent is a one-off assistant, forgetting is acceptable. But when an agent is a participant in ongoing work, forgetting is a bug. But so is remembering the wrong thing.

A stateless agent feels like a tool. A memory-aware agent can feel like a teammate. But an ungoverned memory-aware agent becomes a reliability risk.

If context is your real product, memory is what determines which context your agent carries forward. Getting that wrong is a new category of production failure, and most teams are not yet building defenses against it.

Memory is Not Context

Context is what the model sees right now: the active window, the current prompt, the retrieved documents, and the conversation so far.

Memory is what the system decides should persist later.

Chat history is chronological. It records everything in order. Memory is selective. It stores what was judged worth keeping and retrieves only what is relevant now.

These are different mechanisms serving different purposes, and conflating them is where production problems begin.

A memory system makes active decisions:

What to store and what to discard immediately.

What to retrieve and what to suppress from influencing this response.

What to expire and when.

What to expose to the user versus keep internal.

What to block from the output entirely.

The alternative to selective memory is stuffing everything into context. That does not work in production. The State of AI Agent Memory 2026 report benchmarked this directly on the LOCOMO benchmark: full-context retrieval achieves 72.9% accuracy but requires 17.12 seconds at p95 latency and approximately 26,000 tokens per conversation.

The report is specific about what that means in practice: “a 17-second tail latency means one in twenty users waits 17 seconds for a response, at a token cost roughly 14 times higher than the selective memory approaches.”

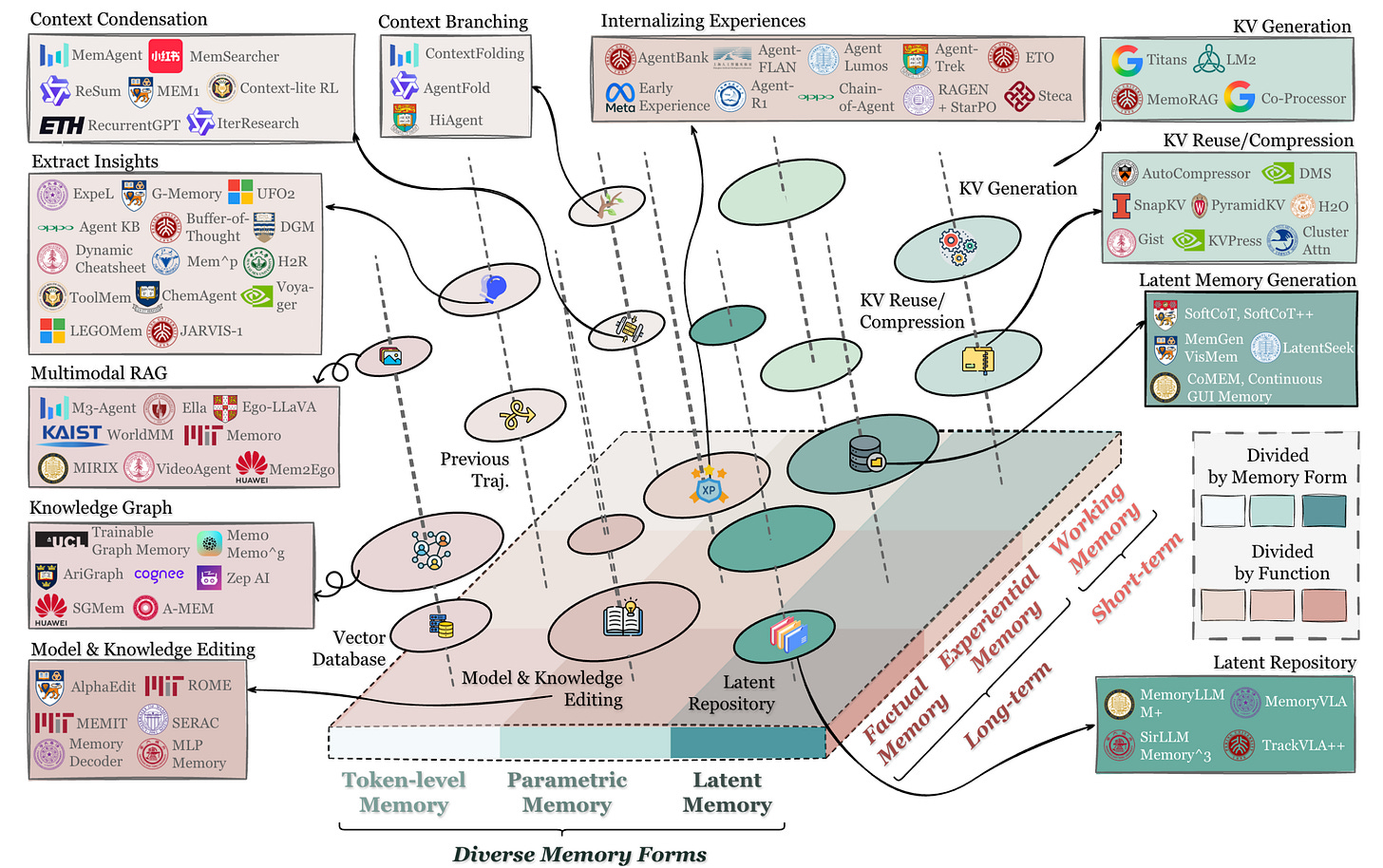

A December 2025 academic survey, “Memory in the Age of AI Agents”, makes this distinction formal. The paper explicitly scopes agent memory as separate from RAG, context engineering, and LLM memory. It argues that existing short/long-term taxonomies “fail to capture contemporary agent memory diversity.”

The authors propose three distinct forms — token-level, parametric, and latent — each serving different functions: factual, experiential, and working memory. Memory is not one mechanism. It is a family of mechanisms, each with different design requirements.

The gap shows up in how the industry defines agents.

In his Agent Engineering piece, swyx critiques OpenAI’s TRIM framework — Tools, Runtime, Instructions, Model — for omitting both memory and planning from its definition of an agent. He contrasted it with Lilian Weng’s own formulation, which includes both.

Frameworks that don’t account for memory produce agents that reset rather than compound. Every session starts from scratch, and every learned constraint must be re-established.

The most direct evidence that context does not replace memory comes from the frontier models themselves. Anthropic released Claude Opus 4.7 on April 16, 2026 — a model with a 1M token context window — and its primary new capability was file-system-based memory. It is the ability to remember notes across long, multi-session work without relying on the context window to hold them.

OpenAI released GPT-5.5 on April 24, 2026, also with a 1M context window. The models include agentic improvements focused on maintaining context within a session. And not across sessions.

Both frontier models, with the largest context windows commercially available, still treat memory and context as separate, unsolved problems.

Context engineering for AI agents is the discipline of deciding what enters the model’s window. Memory is the persistence layer within that discipline. It is not a synonym, but a specific, governable component.

The Four Scopes of AI Agent Memory

Memory is not one thing. Production agents operate across four distinct memory scopes, each with different owners, different retention rules, and different risk profiles.

1. User Memory

What the agent retains about a specific user: preferences, recurring constraints, communication style, and stated goals.

Example: “Prefer concise technical summaries with examples.”

Risk: Overgeneralization. A one-time request becomes a permanent assumption applied to every future interaction.

2. Task Memory

The current objective, previous attempts, blockers, and intermediate state across a working session.

Example: “The previous implementation failed because the auth fixture was stale.”

Risk: Carrying a failed approach into a new session without flagging it as resolved or explicitly abandoned.

3. Project Memory

Architecture decisions, repository conventions, customer constraints, and product assumptions that apply across all tasks in a project.

Example: “This product does not allow new dependencies without approval.”

Risk: Stale project memory. Decisions that were correct six months ago and have since changed remain in the agent’s working context, applied with the same confidence as when they were written.

One approach to structuring project memory: in his llm-wiki gist, Karpathy proposes a three-layer architecture where agents maintain a wiki — LLM-generated markdown files serving as structured summaries, entity pages, and concept pages that the agent owns and updates over time. Agents perform three operations on it:

Ingest new decisions and documents as they arrive.

Query the wiki before acting, rather than re-deriving from raw sources.

Lint it periodically to remove contradictions, stale claims, and orphaned entries.

Karpathy’s framing: “the wiki is a persistent, compounding artifact.” Knowledge is built once and kept current — cross-references already exist, contradictions have already been flagged — rather than re-derived from scratch each session. That is what project memory should be.

4. Operational Memory

Tool calls, approvals, failures, eval outcomes, rollbacks, and deployment state. The audit trail of what the agent actually did and what happened as a result.

Example: “The last deployment was rolled back because latency crossed the threshold.”

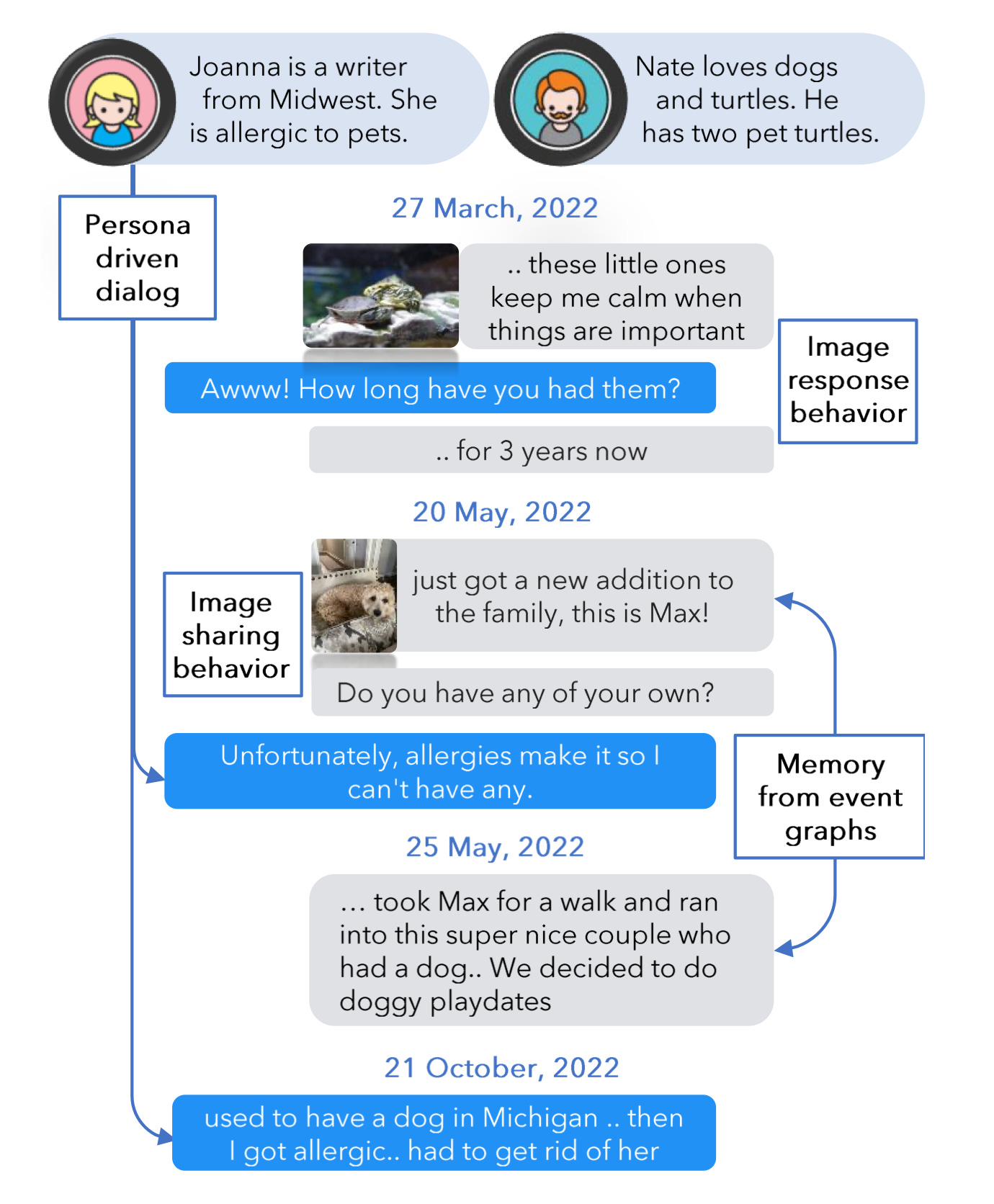

Risk: Actor confusion in multi-agent systems. The State of AI Agent Memory 2026 report describes this failure mode directly: “avoiding situations where one agent’s inference gets treated as ground truth by another agent downstream.”

Actor-aware memory architectures address this by tagging each memory with its source, so downstream agents know whether a memory came from a user statement, another agent’s inference, or an intermediate step.

Understanding these scopes is foundational to agentic AI workflows that carry useful state across time rather than resetting on every session. It is also the starting point for persistent state in agent architecture: each scope requires different storage, access rules, and expiry logic.

What Agents Should Remember, Forget, and Never Store

Memory is a product decision before it is a storage decision. Three categories govern what a production agent may retain.

Remember: The agent must remember stable information that improves continuity:

User preferences and communication style.

Project conventions and architecture decisions.

Approved decisions and stated constraints.

Recurring workflow patterns and their outcomes.

Known failure patterns and how they were resolved.

These are the core pieces of information that might not change for a season, such as for a project duration or brand voicing.

Forget: This refers to temporary or outdated information:

One-off instructions that applied to a single session.

Stale product decisions that have since changed.

Temporary debugging paths that were resolved.

Outdated evaluation results.

Old customer context after an account transition.

Never Store: These are sensitive or unsafe information:

Credentials and secrets.

Private customer data outside the approved scope.

Sensitive personal data unless explicitly required and governed.

Unsupported inferences about the user’s identity or intent.

Every memory type needs an owner, a scope, an expiry rule, and a deletion path. Without those four things, memory accumulates without governance. The State of AI Agent Memory 2026 report is direct on this under its Open Problems section: “user-level memories require consent and governance. What exactly that governance looks like...is currently an application-layer concern.”

Product teams must define this themselves rather than wait for the infrastructure layer to enforce it.

The multi-agent product control plane is where these rules live in practice. This includes who can read a memory, who can edit it, which agents can access which scopes, and what happens when memory crosses workspace or tenant boundaries.

How Agent Memory Fails in Production

Six failure modes, each distinct, each harder to debug than a stateless agent.

Stale Memory

The agent applies an old decision after the team changed direction. The memory is still highly relevant, so the agent uses it with confidence.

The issue with stale memory is that it produces “confidently wrong” outputs. High relevance combined with incorrect information is worse than irrelevance, because it does not signal uncertainty.

Overgeneralized Memory

A one-time instruction (”skip the validation step for this session”) gets stored as a permanent preference and applied to every subsequent task.

Wrong-Scope Memory

Context from one user, customer, repository, or workspace leaks into another. In multi-agent systems, this is the actor-aware failure: one agent’s inference contaminates downstream agents that have no way to verify the source or the confidence level behind it.

Memory Conflict

Stored memory contradicts the current user instruction. Without explicit conflict-resolution rules, the agent must choose, and it may choose incorrectly without surfacing the conflict to the user.

Hidden Influence

The user receives a response shaped by a retrieved memory but has no visibility into which memory fired, when it was written, or why it was retrieved. The output is unexplainable.

Bad Retrieval

The correct memory exists. The agent retrieves the wrong one or misses it entirely. In “Agents that remember: introducing Agent Memory”, the authors describe running five parallel retrieval methods: full-text, exact key lookup, raw message search, direct vectors, and HyDE vectors. Results are fused through Reciprocal Rank Fusion with weighted scoring. The reason they built it this way: “no single retrieval method works best for all queries, so we run several methods in parallel and fuse the results.”

Bad retrieval is a system design problem. It is not a model problem.

Stale memory is also a specific, application-level instance of context rot. Here, the degradation of context quality over time as information goes stale or contradictory. The fix is the same in both cases, i.e., active expiry rules and freshness checks, not passive accumulation.

Retrieval failure is particularly difficult to diagnose without visibility into how embeddings for AI agents are used in semantic lookup. When a retrieval returns a plausible but wrong memory, the model treats it as a signal. The resulting error traces back to the retrieval layer, not the generation layer.

Memory Needs Evals and Observability

You cannot treat memory as a database feature. A correct write and a successful retrieval do not mean the memory-influenced behavior is correct. You have to evaluate the behavior memory creates, not just the memory itself.

Useful eval questions:

Did the agent retrieve the right memory for this task?

Did it correctly ignore irrelevant stored memory?

Did it prioritize the current instruction over an older stored preference when they conflicted?

Did it avoid expired or out-of-scope memory?

Did memory improve task completion, or introduce errors?

Did memory increase latency or token cost meaningfully?

Did the user correct or override a memory-influenced output? (That correction is a signal worth capturing.)

Required logs per memory event:

Memory ID and type.

Memory scope: user, task, project, or operational.

Creation source: Which agent, session, or user action created it?

Last updated timestamp.

Retrieval trigger and confidence score.

Did this memory influence the final output?

Downstream tool calls are affected by this memory.

The LOCOMO benchmark evaluates memory across accuracy, token consumption, and latency together, not just recall. That multi-axis framing is the right model for production evals. Optimizing for accuracy alone, while missing latency, is how you ship something that passes tests but breaks under real usage.

The same principle applies to compaction. Claude Opus 4.7 introduced compaction — server-side summarization that automatically condenses earlier conversation turns to extend long-running agents beyond context limits.

Compaction is itself a form of selective memory. Here, the system decides what to summarize, what to drop, and what to preserve across a session boundary. That decision needs evaluation, too. A compaction step that summarizes incorrectly or drops the wrong operational state can corrupt an agent’s working context without surfacing any visible error. The eval question is the same:

What did the system preserve?

What did it discard?

Did agent behavior degrade afterward?

Evaluating AI agents in production already requires traces across tools, prompts, and outputs. Memory adds a new layer to that trace.

The question is whether your observability stack can surface which memory fired, when it was created, and how it shaped the output — or whether debugging a memory-influenced failure means guessing.

Platforms like Adaline are built to expose that layer, so teams can trace and correct memory behavior without having to reconstruct it from logs after the fact.

A Practical Memory Spec For Product Teams

Before shipping any memory capability, a product or engineering team should be able to answer every one of these:

What should the agent remember?

What should it forget?

What should it never store?

Is memory scoped to the user, task, project, workspace, or organization?

When does each memory type expire?

Who can inspect, edit, or delete stored memory?

What happens when stored memory conflicts with the current prompt?

Which evals must pass before memory is enabled in production?

What logs are required to trace and debug memory-influenced outputs?

If any of those questions are unanswered, memory is not a feature. It is a liability that has not materialized yet.

The production-ready agent does not remember everything. It remembers the right thing, at the right time, for the right reason.